Measuring the Perception of Latency with a Haptic Glove

July 18, 2019

This blog is cross-posted from Tech @ Facebook. Go here for the full post and for more information on research happening at Facebook.

A little over a year ago I joined Facebook Reality Labs. I get to work with world class experts to invent new technology beyond the bleeding edge.

Unfortunately, one thing I lost was the ability to blog about my work. Everything I work on is top secret. Today that changes.

A Programmer's Tale

This post tells a story. I'll talk about a software engineering project I worked on. How that led to an unexpected discovery. Which caused us to run a user study. That produced some novel findings.

I'm just a regular programmer. My blog targets a 3rd grade reading level. This post is a casual introduction to some of the lab's work.

Meanwhile, my co-worker Max Di Luca recently published a paper titled “Perceptual Limits of Visual-Haptic Simultaneity in Virtual Reality Interactions”. If you're looking for a PhD deep cut then I strongly encourage you to read his paper.

Haptic Glove Demo

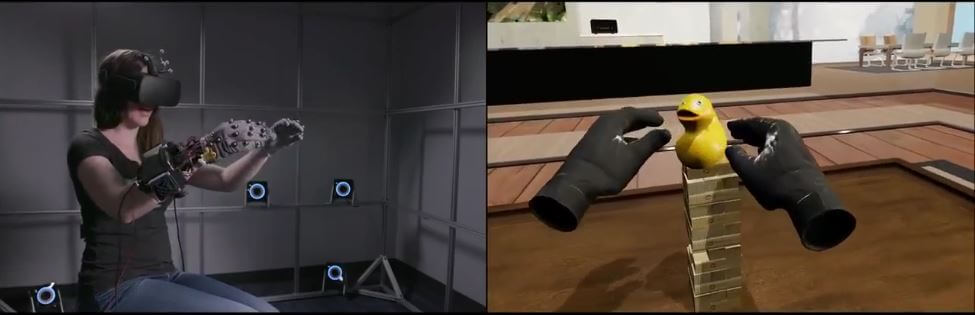

One of our research projects is a haptic glove. Michael Abrash unveiled it with a brief video at Oculus Connect 5.

In this video a user is wearing a haptic glove. Her hands are tracked and fully articulated in virtual reality. When she touches a virtual block tower with her virtual hand the real glove produces haptic feedback.

In the early versions of this demo there was a problem. Lag. There was obvious lag on the audio and haptic feedback. Users saw their hand impact the block tower before they felt or heard it.

I love working on optimization problems. One of the first tasks is to measure. For pure software problems this is easy. There are a variety of tools for profiling code performance.

Measuring hardware latency is more difficult. Fortunately there is some precedent to build on.

Video Game Input Latency

Video game developers have been measuring input latency for years. The difference between a game that “feels” good and one that “feels” bad is often lag, which is hard to quantify.

Input latency is a simple concept. When you press a button, how long does it take for Mario to start jumping? How many milliseconds until the pixels on a TV change?

A quick and dirty way to measure is with a smartphone. You can record a slow-motion video at 240fps and count frames.
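The frame-counting arithmetic is simple enough to sketch. This is a minimal illustration, not code from the project; the function names are made up for this example, and the 240fps default just mirrors the slow-motion mode mentioned above.

```python
def frames_to_latency_ms(frame_count: int, fps: float = 240.0) -> float:
    """Convert a number of counted video frames into milliseconds."""
    return frame_count * 1000.0 / fps


def frame_resolution_ms(fps: float = 240.0) -> float:
    """Duration of a single frame, which bounds how precise the count can be."""
    return 1000.0 / fps


# Example: 24 frames counted between the button press and the first pixel change.
latency_ms = frames_to_latency_ms(24)
resolution_ms = frame_resolution_ms()
```

At 240fps each frame covers a bit over 4 milliseconds, so a frame count can't resolve anything finer than that. This is part of why the methods that follow are more precise.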

A better method involves a custom controller. The controller is wired to a board with LEDs. The LEDs light up when a button signal is detected. Combined with high speed video this enables a more precise measurement.

An even better solution is to modify the game to render a black square that flashes white when a button is pressed. A light sensor can be attached to the display to give a very precise measurement.

Source: BenHeck.com

End-to-End Latency

Now we know how to measure latency. Great!

Not quite. The method described measures button-to-photon visual latency. We care about latency to a haptic actuator sewn inside a glove. There's nothing to point a camera at.

Even worse, we don't have a button. We're using a state of the art hand tracking system. The haptic response occurs when the physics simulation detects a collision with the virtual hands.

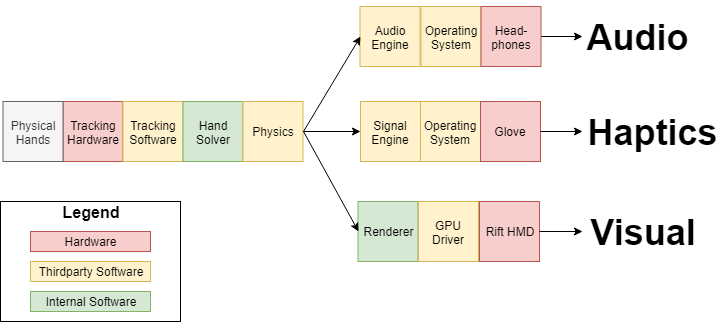

We could isolate the haptics. But what we care about is the end-to-end latency of the demo experience. This includes the hardware tracking system, internal software, third party software, device drivers, and output hardware.

Here's our measurement solution.

We align a physical table with the virtual table. When the user's physical hand hits the physical table their virtual hand will also hit the virtual table.

Then we use two microphones. One pointed at the table which records the sound of physical impact. The second is a contact mic inside the glove which records the haptic response. Both mics feed into a field recorder which synchronizes the streams.

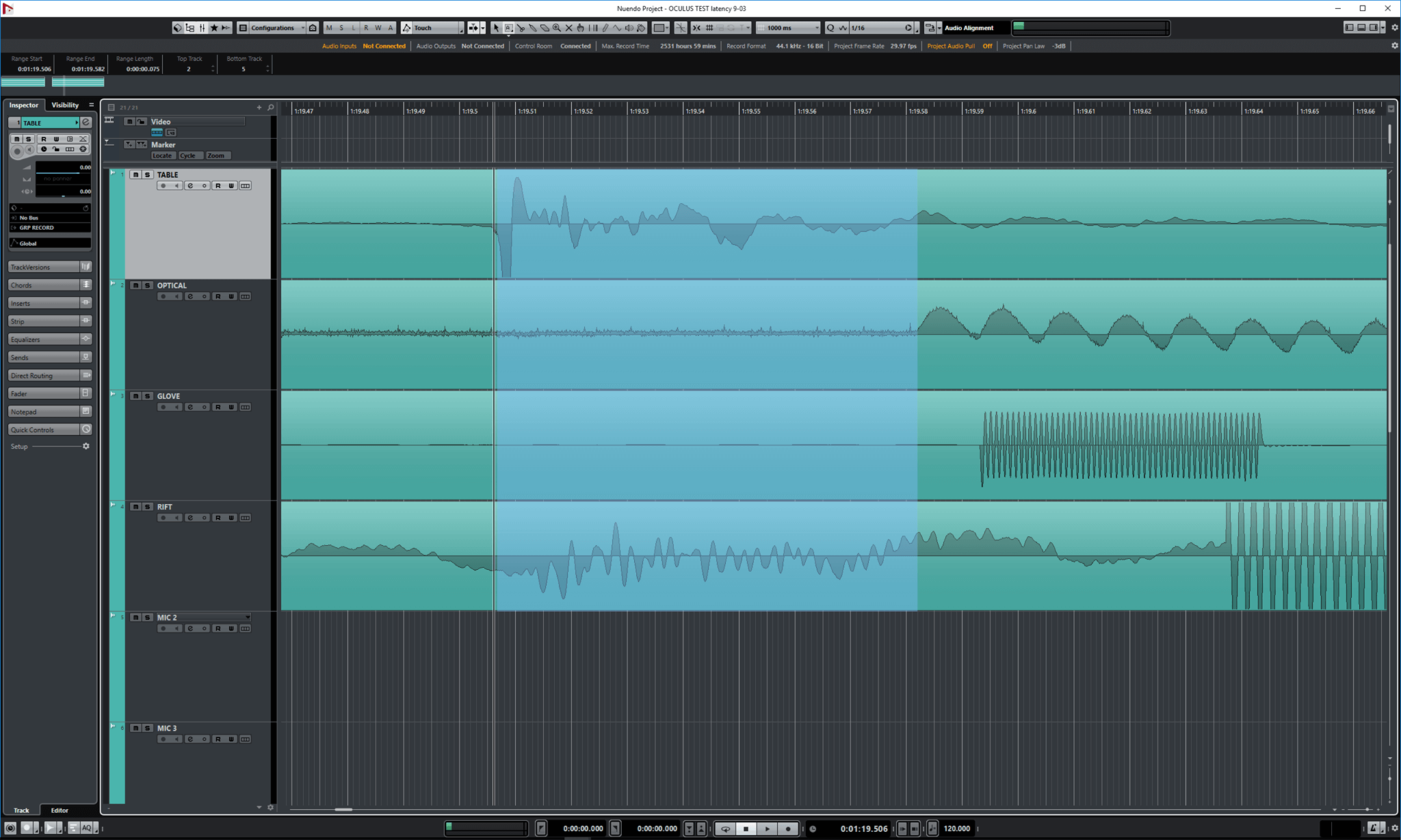

Instead of counting video frames we open the audio streams in Nuendo. We annotate the streams by hand then compute delta time which is our end-to-end latency.
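We marked the onsets by hand, but the delta time computation itself can be sketched in a few lines. The snippet below swaps the manual annotation for a naive amplitude threshold, which is an assumption made for illustration and not what we actually did. The function names, the threshold value, and the sample rate are all hypothetical.

```python
from typing import Optional, Sequence


def first_onset_index(samples: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the first sample whose magnitude reaches the threshold."""
    for i, sample in enumerate(samples):
        if abs(sample) >= threshold:
            return i
    return None


def latency_ms(
    tap_stream: Sequence[float],
    response_stream: Sequence[float],
    sample_rate_hz: float,
    threshold: float = 0.5,
) -> float:
    """Delta time between the physical tap and the response, in milliseconds.

    Assumes both streams are synchronized and share one sample rate,
    as they would when captured by the same field recorder.
    """
    tap_index = first_onset_index(tap_stream, threshold)
    response_index = first_onset_index(response_stream, threshold)
    if tap_index is None or response_index is None:
        raise ValueError("no onset found in one of the streams")
    return (response_index - tap_index) * 1000.0 / sample_rate_hz


# Synthetic streams at a made-up 1000 Hz sample rate:
# the tap spikes at sample 10, the glove response at sample 310.
tap = [0.0] * 400
tap[10] = 1.0
glove = [0.0] * 400
glove[310] = 0.8
delta = latency_ms(tap, glove, sample_rate_hz=1000.0)
```

Real recordings are noisy, so a fixed threshold would need tuning per microphone. Annotating by hand sidesteps that problem for a small number of taps.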

Our haptic latency measurement came in at a whopping 300 milliseconds. Everyone knew it was bad. But this was the first time we had a concrete number.

About 200 milliseconds of this time came from third-party software. Our projects are non-conventional. Haptic devices wish they could run at 1000 Hz. This leads to all kinds of weird edge cases. Once we knew where the problem was we were able to tweak behavior to avoid the middleware spiral of death.

Our software pipeline runs through multiple subsystems. They run on several threads and update at different rates.

Threading issues led to wasted cycles and, at times, a corrupt signal. FramePro was a great tool for visualizing multi-threaded behavior.

To verify our fixes we measured the haptic actuator with an oscilloscope. As a software person that was a fun and new experience for me.

Tri-Modal Latency

Astute readers may have noticed four streams in my Nuendo screenshot. My primary goal was to measure and improve haptic latency. However our system is actually tri-modal. We care about haptics (glove), visual (VR display), and audio (headphones).

To measure all three modalities we recorded four synchronized streams.

- Table microphone to detect physical tap.

- Contact microphone to detect glove haptics.

- Microphone to detect Rift headphone audio.

- Photodiode to detect display change (black to white).
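Once each stream has an onset time, the per-modality latencies fall out of simple subtraction against the table tap. Here is a minimal sketch. The stream names and the onset values are placeholders invented for this example, not measurements from our setup.

```python
from typing import Dict


def latencies_from_tap(
    onsets_ms: Dict[str, int], reference: str = "table_tap"
) -> Dict[str, int]:
    """Latency of every stream relative to the reference (physical tap) stream."""
    t0 = onsets_ms[reference]
    return {name: t - t0 for name, t in onsets_ms.items() if name != reference}


# Placeholder onset times in milliseconds on the shared recorder timeline.
example_onsets_ms = {"table_tap": 1000, "haptic": 1090, "audio": 1130, "visual": 1070}

latencies = latencies_from_tap(example_onsets_ms)
# Asynchrony between two modalities is the difference of their latencies.
audio_minus_haptic = latencies["audio"] - latencies["haptic"]
```

Keeping every stream on one shared timeline is what makes this work. The comparison is only meaningful because the recorder synchronizes the streams.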

We also used a capacitive breadboard connected to USB as our physical tap target. This allowed us to grab a software timestamp. Which we used to measure the tracking system latency.

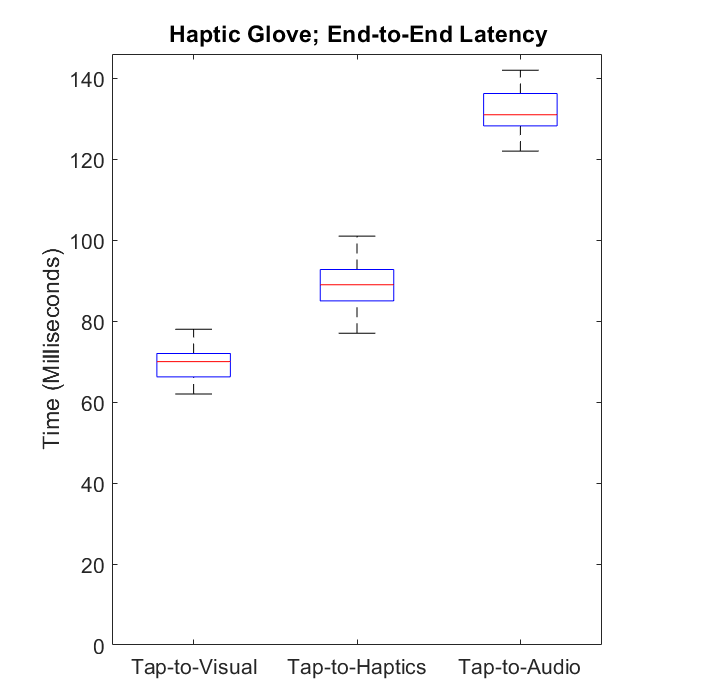

With this tooling we were able to improve performance and produce the following infographic.

At this point our block tower demo felt much, much better. The haptic feedback wasn't nearly as laggy and disconnected. Users felt impacts the same moment they saw them.

Success! Or was it? 🤔

Perceptual Simultaneity

The human brain is a curious thing. Humans are astonishingly good at building a mental model of the world based on multiple sources of sensory information.

But sometimes our senses can be deceiving. You may have noticed a problem in my previous infographic. Visual, haptic, and audio latency aren't the same. Haptic latency is sub-100 milliseconds. Audio is about 40 milliseconds slower.

During our improved demo someone asked if we could disable the audio. What happened next blew me away. The haptics felt radically more responsive.

I wish I could share this experience with you. With audio enabled the haptics felt good. With audio off haptics felt great. Not just better. Obviously better. We excitedly ran around the office grabbing people to see if the difference affected everyone. It did.

What's happening is that the brain is fusing multiple sensory signals into unified perceptual events. When you see, feel, and hear your hand tapping a table, your brain interprets the three sensory stimuli as a single impact event.

When one of those signals, such as sound, is slightly delayed then our perception of the other stimuli shifts with it because the sound “captures” the other stimuli. This causes us to perceive the haptic sensation to occur later than it actually occurred.

Here's where it gets weird and cool. This perceptual shift is automatic and involuntary. Knowing that the audio delay exists doesn't allow your brain to account for the delay and correct it. No matter how hard anyone focused on fingertip haptics, the delayed audio still caused haptics to feel less responsive.

Psychophysics

Now we have all sorts of interesting questions to ask. How much latency is too much? How synchronous do stimuli need to be? Is it more important to lower latency or increase synchrony?

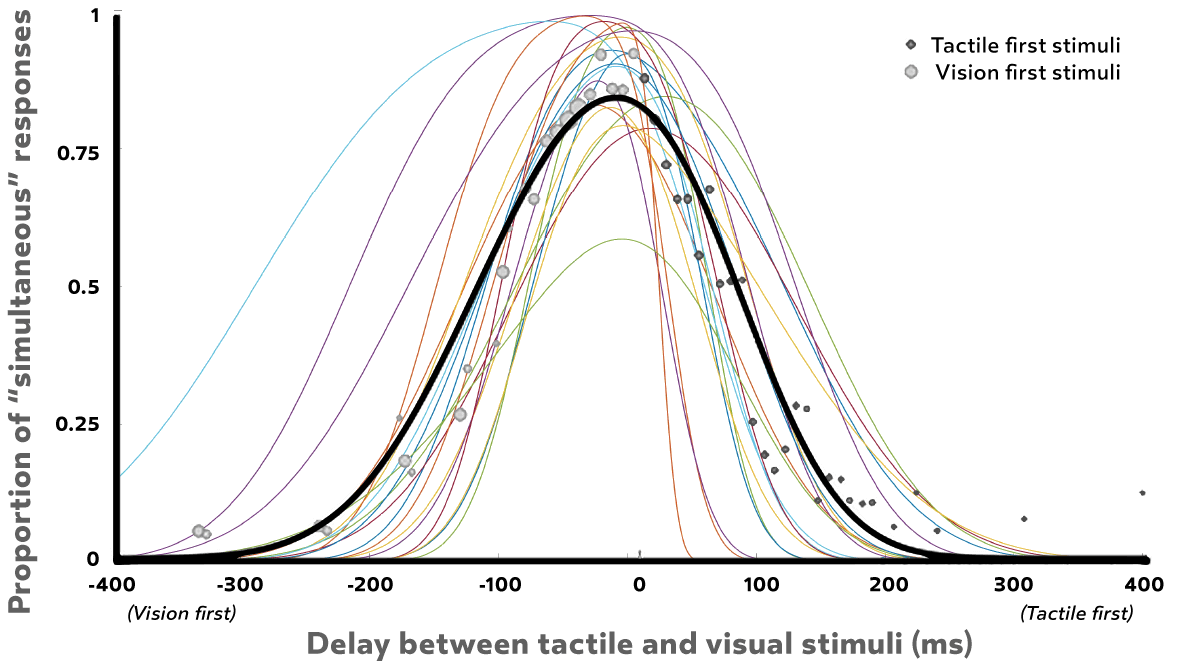

To start answering these questions we ran a psychophysical experiment. Recall for a moment the title of Max's paper: “Perceptual Limits of Visual-Haptic Simultaneity in Virtual Reality Interactions”.

Our first study was simple. We wanted to assess how much latency is allowed between a visual and a haptic stimulus for users to consider them simultaneous. This test was performed in virtual reality, with tracked and fully articulated hands. Participants performed a task to tap a virtual cube.

To simulate different latencies we wrote code to inject extra latency. We also wrote some simple prediction code that allowed us to trigger haptic stimuli early — even before the finger hit the surface. This was a way to simulate lower latencies than our system allowed. Our prediction code wouldn't work in a consumer product. But it works great in a controlled environment where you can tell participants exactly how to behave.
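Both tricks are easy to sketch. The code below is an illustration under stated assumptions, not our study code: latency injection is modeled as a queue that holds events for a fixed extra delay, and prediction is modeled as a constant-velocity estimate of time to contact. Every class, function, and parameter name here is hypothetical.

```python
import heapq
from typing import List, Optional, Tuple


class DelayedEventQueue:
    """Holds events and releases them only after an injected extra delay."""

    def __init__(self, extra_latency_ms: float) -> None:
        self.extra_latency_ms = extra_latency_ms
        self._heap: List[Tuple[float, int, str]] = []
        self._counter = 0  # tie-breaker so equal release times stay in push order

    def push(self, now_ms: float, event: str) -> None:
        release_ms = now_ms + self.extra_latency_ms
        heapq.heappush(self._heap, (release_ms, self._counter, event))
        self._counter += 1

    def pop_due(self, now_ms: float) -> List[str]:
        """Return every event whose release time has passed."""
        due = []
        while self._heap and self._heap[0][0] <= now_ms:
            due.append(heapq.heappop(self._heap)[2])
        return due


def predicted_time_to_contact_ms(
    distance_m: float, approach_speed_m_per_s: float
) -> Optional[float]:
    """Constant-velocity estimate of when the finger reaches the surface."""
    if approach_speed_m_per_s <= 0:
        return None  # finger is stationary or moving away
    return distance_m / approach_speed_m_per_s * 1000.0


def should_fire_early(
    distance_m: float, approach_speed_m_per_s: float, lead_ms: float
) -> bool:
    """Trigger the haptic stimulus once predicted contact is within lead_ms."""
    ttc = predicted_time_to_contact_ms(distance_m, approach_speed_m_per_s)
    return ttc is not None and ttc <= lead_ms
```

A constant-velocity guess like this only holds up when the motion is predictable, which is why it suits a controlled tapping task and not a consumer product.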

The majority of participants considered visual-tactile stimuli to be simultaneous if the haptic response was played less than 50 milliseconds after the visual response. In our glove demo the haptic response was only 20 milliseconds after visual. Well within bounds!

Interestingly, the stimuli were not considered simultaneous if the haptic response played more than 20 milliseconds before the visual response. This is a generous window of 70 milliseconds, but it's not centered at t=0.
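The asymmetry is easy to see when the window is written down. This sketch treats the two limits as hard cutoffs, which is a simplification made for illustration: the real result describes what the majority of participants reported, not a sharp boundary. The constant and function names are invented for this example.

```python
# Limits taken from the text; treating them as hard cutoffs is an assumption.
HAPTIC_LEAD_LIMIT_MS = 20.0  # haptics may play up to this long before visuals
HAPTIC_LAG_LIMIT_MS = 50.0   # haptics may play up to this long after visuals


def judged_simultaneous(haptic_minus_visual_ms: float) -> bool:
    """True if the haptic offset (positive means after visual) is inside the window."""
    return -HAPTIC_LEAD_LIMIT_MS <= haptic_minus_visual_ms <= HAPTIC_LAG_LIMIT_MS


window_width_ms = HAPTIC_LEAD_LIMIT_MS + HAPTIC_LAG_LIMIT_MS
window_center_ms = (HAPTIC_LAG_LIMIT_MS - HAPTIC_LEAD_LIMIT_MS) / 2.0
```

The width comes out to 70 milliseconds and the center sits 15 milliseconds after the visual stimulus, so a little haptic lag is tolerated better than the same amount of haptic lead.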

For full methodology, analysis, and discussion please refer to Max's paper.

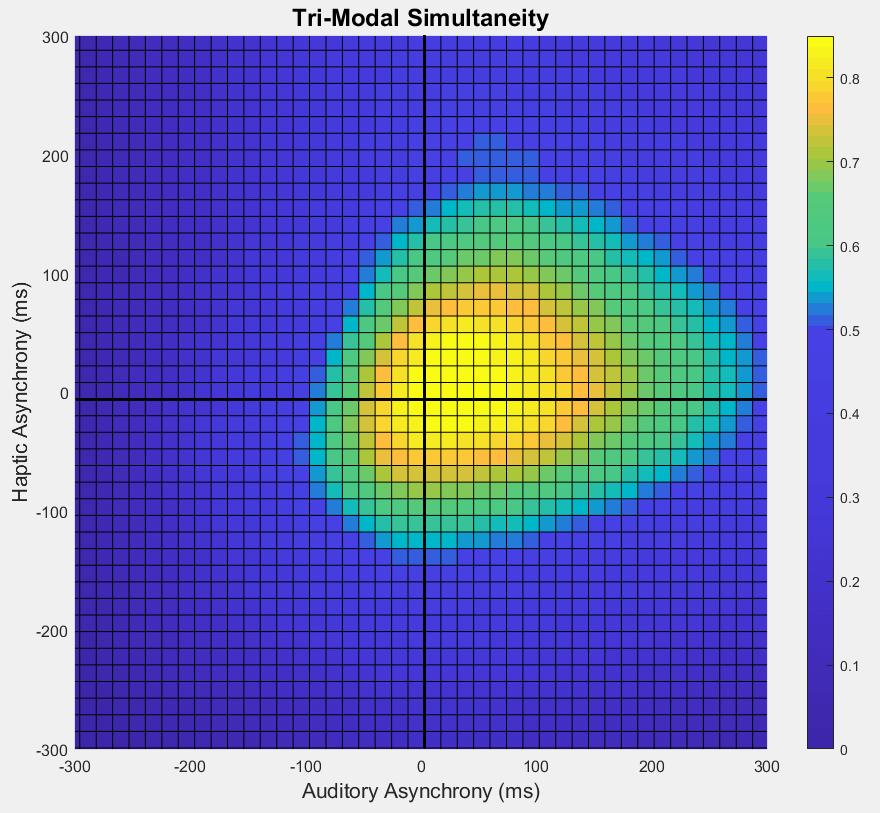

Tri-Modal Simultaneity

Our initial study focused on a simple two-stimuli test. Next, we ran a more complicated tri-modal simultaneity study with visuals, haptics, and sound.

This is a significantly more difficult problem to model and visualize. We have data, but analysis is still ongoing. Here are preliminary results.

This chart is very dense. Let's unpack it.

The origin represents the situation where all three stimuli happen at the exact same time. All other positions on the graph represent asynchronies relative to the time of the visual stimulus. The x-axis indicates the latency of audio stimuli relative to visual stimuli. Positive x-values mean that the sound played after the visual flash. Negative x-values mean that the sound played before the flash. The y-axis is similar, but for haptic stimuli. The color coding is what percent of participants rated the three stimuli as simultaneous.
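The data behind a chart like this can be sketched as a grid keyed by the two offsets. The snippet below is a minimal illustration and not our analysis code. It assumes each trial is one simultaneity judgment at a given pair of offsets, and the type and function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# (audio_offset_ms, haptic_offset_ms, rated_simultaneous), offsets relative to visual.
Trial = Tuple[int, int, bool]


def percent_simultaneous(trials: Iterable[Trial]) -> Dict[Tuple[int, int], float]:
    """Percent of responses rated simultaneous for each (audio, haptic) offset cell."""
    counts: Dict[Tuple[int, int], List[int]] = defaultdict(lambda: [0, 0])
    for audio_ms, haptic_ms, simultaneous in trials:
        cell = counts[(audio_ms, haptic_ms)]
        cell[0] += 1  # total responses in this cell
        if simultaneous:
            cell[1] += 1  # responses rated simultaneous
    return {key: 100.0 * yes / total for key, (total, yes) in counts.items()}


# Made-up responses purely to show the shape of the computation.
example_trials: List[Trial] = [
    (0, 0, True),
    (0, 0, True),
    (50, 0, True),
    (50, 0, False),
    (-50, 0, False),
]
grid = percent_simultaneous(example_trials)
```

Each key is one position on the chart and each value is the color at that position.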

Great. What does it mean? Two interesting things jump out at me.

First, the “hot zone” is not centered on the origin! Participants were very sensitive to haptic or audio stimuli that occurred before visual stimuli.

Second, the shape is more elliptical than circular. Participants were more sensitive to haptic delay than to audio delay; asynchronies in that modality were more discernible. In contrast, audio delay tended to be more acceptable. This is in line with the previous description that audio “captured” haptics and could even make it feel delayed.

However it's too early to draw any strong conclusions. Our sample size was small and our initial experiment was very simple. We found that changing the type of interaction can have large effects on what asynchronies were deemed acceptable. Results are likely to change substantially with a different task or different types of stimuli. The questionnaire we posed to participants may not have captured the subtle gradient of “lag” that we experienced in our block tower demo.

Eventually we'd like to issue guidelines for creating compelling haptic user experiences. We aren't there yet. Our research into tri-modal simultaneity isn't complete. Some might say our journey is only 1% finished. We're off to a great start.

Final Thoughts

This concludes my tale for today. I got to work on a fun problem. We discovered something unexpected. And we learned a little bit more about the way in which human perception works.

Many thanks to all readers who made it to the end. I thoroughly enjoyed working on this project. I'm thankful for the opportunity to share it with you.

Facebook Reality Labs is Hiring

Are you interested in working on the future of augmented and virtual reality? If yes, then I have good news. We're hiring!

Facebook Redmond has over 200 job listings. We're hiring all kinds of software engineers - engine, graphics, tools, audio, eye tracking, SLAM tracking, compression, operating systems, and more. We're also hiring research scientists, technical program managers, electrical engineers, mechanical engineers, silicon engineers, and more.

Interested? Learn more about what we've been up to at Facebook Reality Labs, visit tech.fb.com or contact us.