Learning Rust via Advent of Code

February 3, 2019

Learning Rust via Advent of Code

For years the games industry has looked at Rust as the promised land. The language that will save us from C++.

In 2018 I decided to finally take some time to learn Rust. I read the book; which was well organized and delightful. I attended Rust Conf in nearby Portland. Then I started dabbling at home. I don't know much, but I'm making progress.

Advent of Code

The Advent of Code is a programming competition organized by Eric Wastl. It's an advent calendar where every day he releases a new puzzle.

I decided to use the competition as means to learn Rust. I'm writing two blog posts. This post focuses primarily on my experience with Rust. My other post focuses on puzzle solutions and optimizations. If you'd like to read that post it's available here:

Solving Advent of Code in Under a Second

Advent Experience

Learning a new language via Advent of Code turned out to be a great idea. I highly recommend it.

People use every language under the sun to solve AoC puzzles. Python, JavaScript, C, C++, C#, Rust, Haskell, Clojure, Perl, Fortran, Lisp, Lua, Scala, OCaml, Ruby, Php, Swift, and more. Many folks were, like me, using AoC to learn something new.

The community is great. Thousands of programmers are working to solve the same problems. The subreddit has a solutions thread for every puzzle. If you get stuck you can ask questions or peek at other solutions.

Once you have a solution it's useful to see what other people did. I learned a lot of Rust tricks and new algorithms this way.

Rust Noob

I'm a total noob at Rust. I've written two or three thousand lines on toy projects. This post is not "how to write good Rust code". I don't know what I'm doing! This is about my trials, tribulations, and lessons learned.

My solutions and full project are linked below. Solutions for all 25 days are in one file so it's easy to skim.

Parsing Text is Hard

My first stumbling block was parsing. Almost every AoC problem starts with parsing lines of text from an input file.

String parsing in Rust is not easy. There's no random access into Rust Strings because they're UTF-8 encoded. The chars iterator is not very ergonomic. Using as_bytes helps only a little.

My first few attempts were complicated and inefficient. Then I learned how to use Rust's regex library by looking at BurntSushi's AoC solutions.

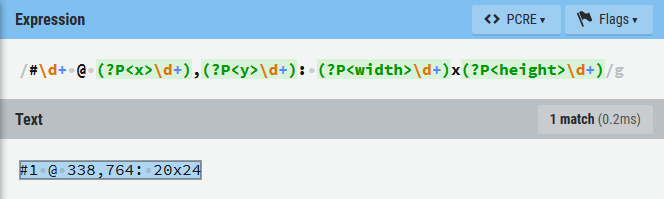

I'm not gonna lie. Using regex's feels like overkill. Consider the following line of input:

#1 @ 338,764: 20x24

Here's my matching Regex code.

Regex::new(r"#\d+ @ (?P<x>\d+),(?P<y>\d+): (?P<w>\d+)x(?P<h>\d+)").unwrap();

Is there a better expression? I don't know. Probably. I feel like there should be a helper for simple parse operations.

parse!("#{} @ {},{}: {}x{}", id, x, y, w, h);

This would be a clean inverse of println!. It would be great for simple cases.

Rexer

I'm not a regex expert. I'm not even an apprentice. I've always joked that regular expressions have the elusive write-once flag. Once you finish writing one you'll never understand what it does ever again.

To help write my parsing regexes I used the free website Regexr. It works well for the Regex crate which uses PCRE-like syntax.

Using an interactive webtool was pleasant. It is far faster than having to compile and run Rust code.

Iterators

My background is C++. In C++ an "iterator" often boils down to a raw pointer. Rust iterators are lazy, composable, and impressively powerful.

I spent a fair amount of time referencing iterator API documentation. I'm still learning what features are available and how to use them. I had a lot of fun trying to be clever.

Enumerate

I love enumerate. It provides a way to use iterators but still have an iteration count.

let mut values : Vec<usize> = vec![3,5,8,13,21]; for (i, value) in values.iter_mut().enumerate() { *value *= i; } assert_eq!(values, [0,5,16,39,84]);

Custom Iterators

Multiple puzzles involved grids and neighbor cells. It was common to perform some action for each neighbor. Iterating neighbors requires checking for borders and possibly neighbor value.

I wrote my own AdjacencyIterator to iterate neighbors and perform border checks. It was super simple. Way easier than writing a C++ STL-compatible iterator.

Rust isn't quite as nice or flexible as C# IEnumerable. Maybe someday?

Expressiveness

Adjacency iterators plus built-in features enabled some delightfully expressive code. Consider the following:

let slot = units .iter() .filter(|unit| self.is_enemy(u)) // ignore non-enemies .flat_map(|unit| unit.pos.adjacent_positions(w,h)) // iterate adjacent positions .filter(|pos| !walls[pos] && !occupied[pos]) // ignore walls or occupied spaces .min_by_key(|pos| (self.pos - pos).length_sq()); // pick closest slot

That code will find the closest unoccupied position that is adjacent to an enemy. If I had written that in C++ it might look like this:

// track best int best_distance_sq = std::numeric_limits<int>::max(); Vec2i best_slot; // iterate units for (size_t i = 0; i < units.size(); ++i) { Unit const& unit = units[i]; // ignore non-enemies if (this->is_enemy(unit) { // iterate adjacent positions static constexpr std::array<Vec2i> offsets = {{-1,0}, {1,0}, {0,-1}, {0,1}}; for (size_t j = 0; j < offsets.size(); ++j) { Vec2 pos = unit.pos + offset; // check bounds if (pos.x >= 0 && pos.x < width && pos.y >= 0 && pos.y < height) { // ignore walls and occupied spaces if (!walls[pos] && !occupied[pos]) { // pick closest slot int dist_sq = (this->pos - pos).length_sq(); if (dist_sq < best_distance_sq) { best_distance_sq = dist_sq; best_slot = pos; } } } } } }

Those blocks of code will produce the exact same result. In theory, they should have the same performance.

Remember, Rust iterators are lazy. The function filter doesn't process every element immediately. It creates a struct that stores a closure. That closure only executes when the iterator function next is called. Which doesn't happen until min_by_key.

I tried to produce a Godbolt example to compare the assembly generated. Unfortunately it requires a lot of boilerplate. The output is too long to reasonably compare.

Writing idiomatic Rust code seems to require trusting the compiler. This is especially true for closure-based iterators. I don't love this. I've already hit instances where I needed to "unroll" simple iterator code to improve performance.

Assorted Goodies

I used and enjoyed quite a few iterator built-ins. Here's a brief summary of what I used for AoC.

- collect - transform iterator into a collection; such as a Vec

- count - counts iterations to reach end

- cycle - creats iterator that loops

- enumerate - creates iterator that returns tuple of iteration count and value

- filter - uses closure to yield values

- find - searches for value

- flat_map - map plus flatten

- fold - applies function to produce a single value

- for_each - calls closure for each value

- map - transforms each value into another

- max - returns largest value in iteration

- max_by - returns largest value using provided comparison function

- min_by_key - returns value given a function

- sum - returns sum of iterator values

Compilation Woes

I had to unroll some iterators just to compile. Consider the following:

dungeon.tiles

.iter()

.filter(|tile| !visited.contains(tile))

.for_each(|tile| {

do_thing(tile);

visited.insert(tile);

});

This fails to compile due to the borrow checker.

The problem is that filter immutably captures visited and for_each mutably captures it. The Rust borrow checker does not allow this.

We could move .contains(tile) into for_each and it'd compile. That may even be preferable. What's interesting, and often frustrating, is that naive "unrolling" will compile while iterators do not.

Profiling

The Rust profiling story is mixed. It could be worse. It could be a lot better.

Sampling

I'm a big fan of sampling profilers. Everyone should have a few in their toolkit. A good sampling profiler can give actionable information in four mouse clicks.

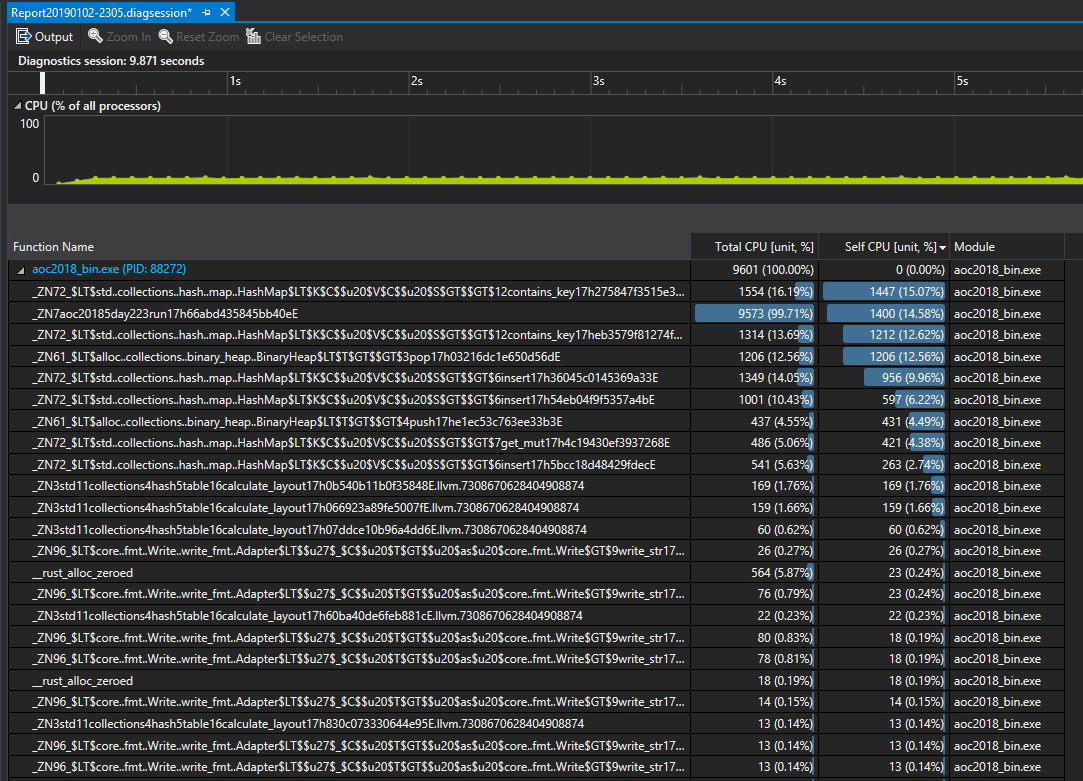

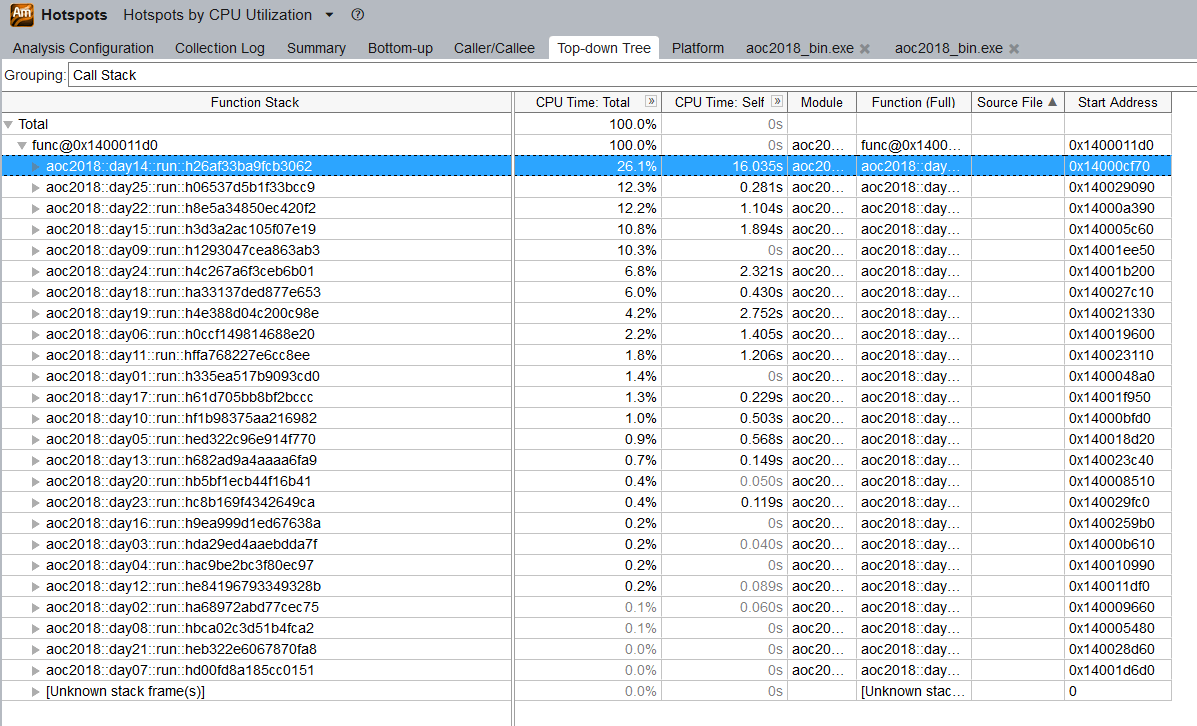

Sampling in Rust works well enough. It'd be better if the function names were demangled.

I tried, and was pleasantly surprised by, Visual Studio's analyzer.

VTune also worked.

I couldn't figure out how to map source files. Screenshots leads me to believe it's possible. I don't know how. :(

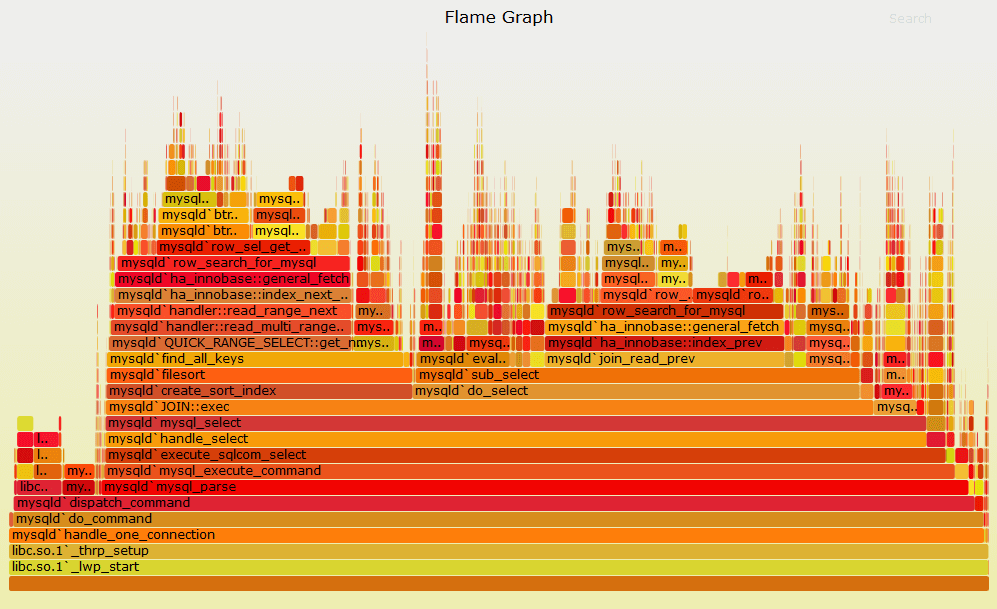

Flamegraphs

I have mixed opinions on flamegraphs. They're a nice visualization of sampling data. Width of each node is a proportional to total time spent. I often prefer the call tree. Your mileage may vary.

Source: Brendan Gregg

The most recommended Rust profiling tool is the flame crate. The flamer crate provides convenience macros.

Flame is a weird crate. It produces flamegraphs, but requires manual instrumentation. It doesn't offer a timeline or thread based view. I tried to instrument some inner-loops and it produced a 28 megabyte HTML file that wouldn't load. It's kinda the worst of both worlds.

I ended up using manual timers. :(

Instrumention

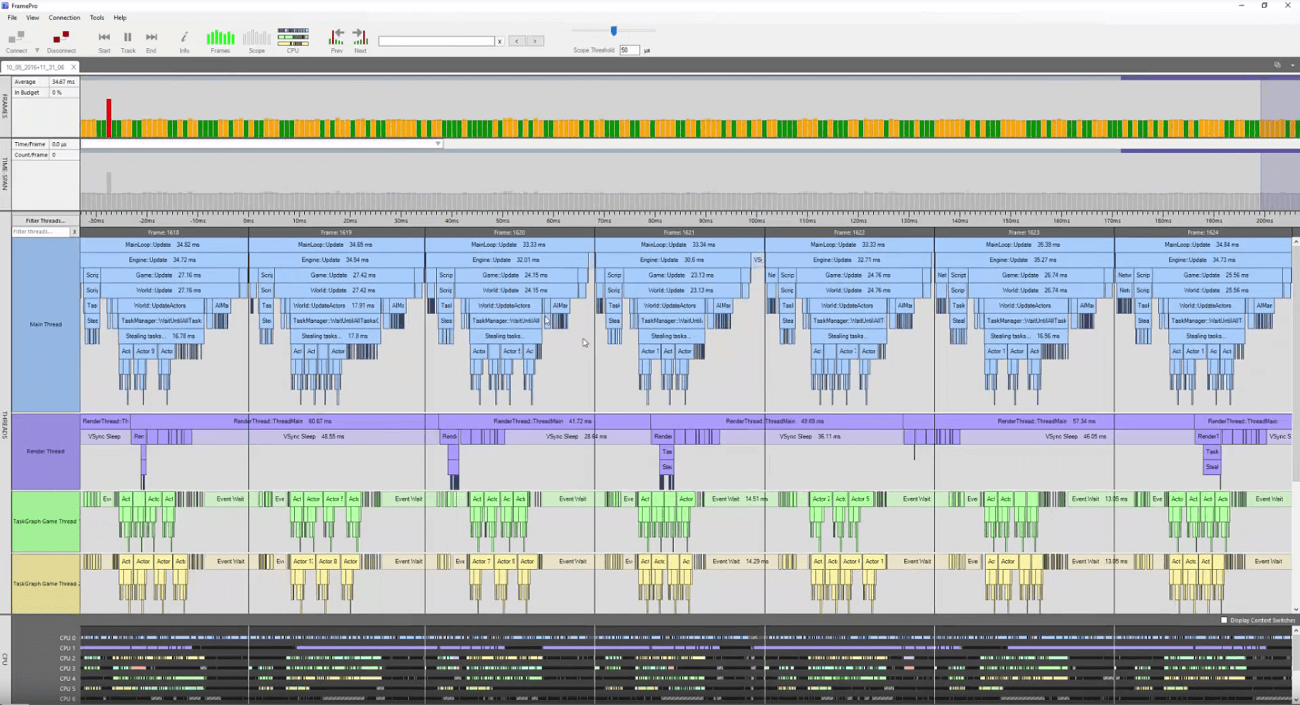

Rust has a big hole in its profiling story.

Flamegraphs are an important tool, but they aren't nearly enough. They aren't real time. They don't provide multithreading information. They expose a very limited type of data. They can't help with frame spikes. Webtools are janky and slow.

I'd love to see Rust get some professional grade profiling tools like Telemetry or FramePro.

Source: FramePro

C/C++ has many robust profiling options. I've used Remotery and it works well. I found several more options with a few minutes of Googling; superluminal, microprofile, easy profiler, and brofiler.

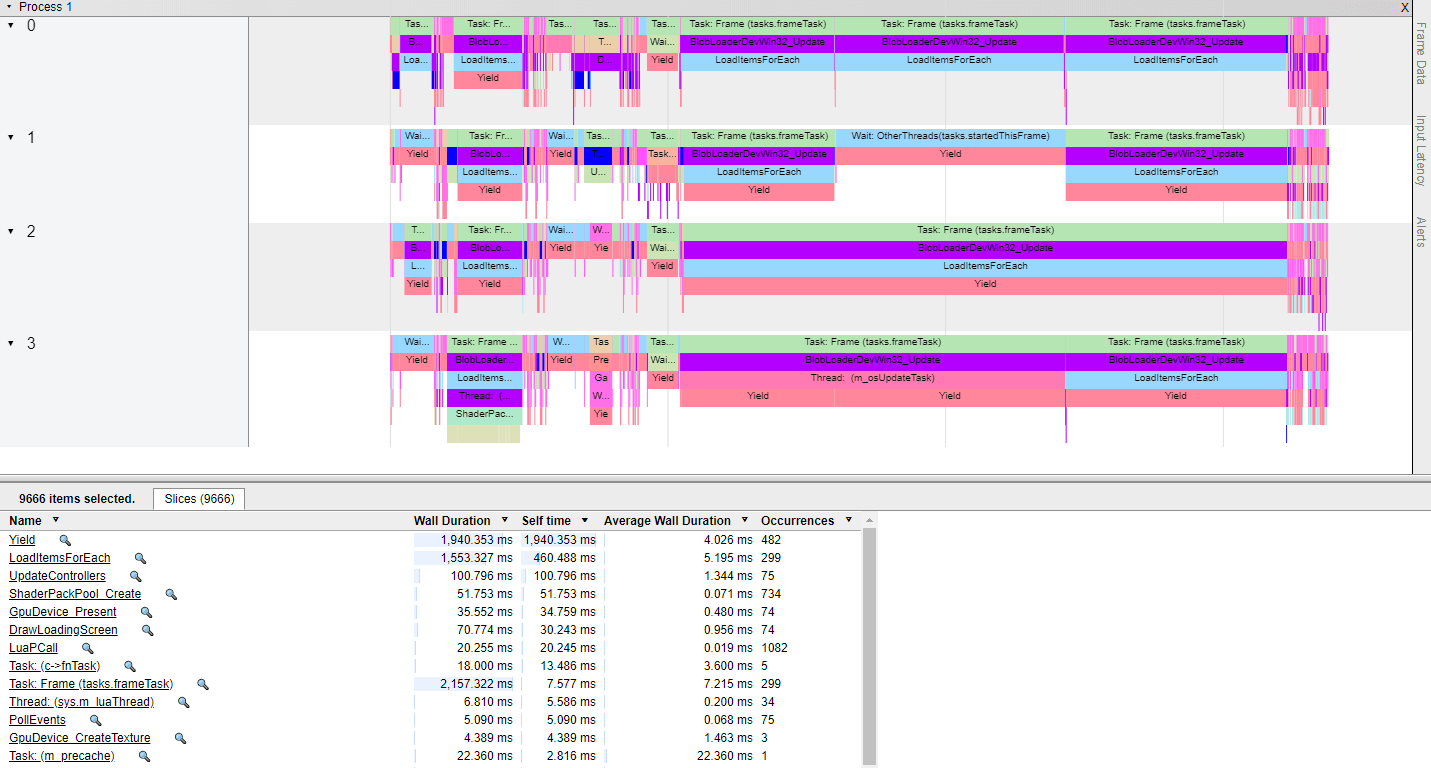

Chrome Trace via minitrace is another option.

Source: Brooke Hodgman

The lack of a good instrumented CPU profiler is a huge hole in the Rust ecosystem. It's also a huge opportunity.

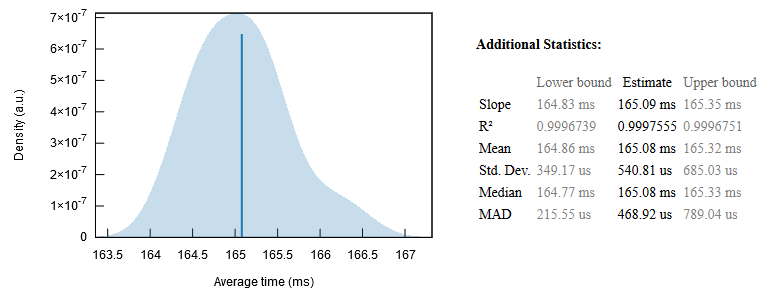

Benchmarking

Rust's benchmarking story is mixed.

The goto crate is Criterion. I had a lot of trouble with it.

My full solution set takes less than one-second to run. Criterion claims to be a "statistics-driven micro-benchmarking" library. Micro appears to be quite literal! Code that takes hundreds of milliseconds may be out of scope.

I attempted to let Criterion run overnight with default settings. It completed benchmarks for only 15 of 25 days in ~12 hours. 🤷

Part of the problem is poor documentation. Functions are documented. How to use them is not. The user guide is insufficient.

I couldn't figure out how to even call sample_size. I had to search GitHub to find a working example. I tried to set measurement_time. It took more than an order of magnitude longer than specified.

Criterion produces some pretty charts via gnuplot. It has a lot of nice features. I was unable to effectively use the tool.

Multithreading

One of Rust's mottos is fearless concurrency. For AoC I chose to focus on single-threaded performance.

I've heard so much about Rayon I couldn't resist giving it a try. In 10 minutes I had naive multithreading working. On my 6-core/12-thread Intel i8700k it cut my total run-time from ~750ms to 200ms. Color me impressed!

That was naively running each puzzle in parallel. Running all solutions 100x it worked out to about 100ms on average. Not bad.

Proper multithreading requires a lot more than sprinkling par_iter() here and there. But for it to have worked so easily is a little mind blowing. I've had horrible experiences trying to parallelize pre-existing C++. Rayon was a breath of fresh air.

Optimization

I spent a fair amount of time on optimization. Some of it algorithmic and some Rust specific. For further details please see my other post:

Solving Advent of Code in Under a Second

Assorted Thoughts

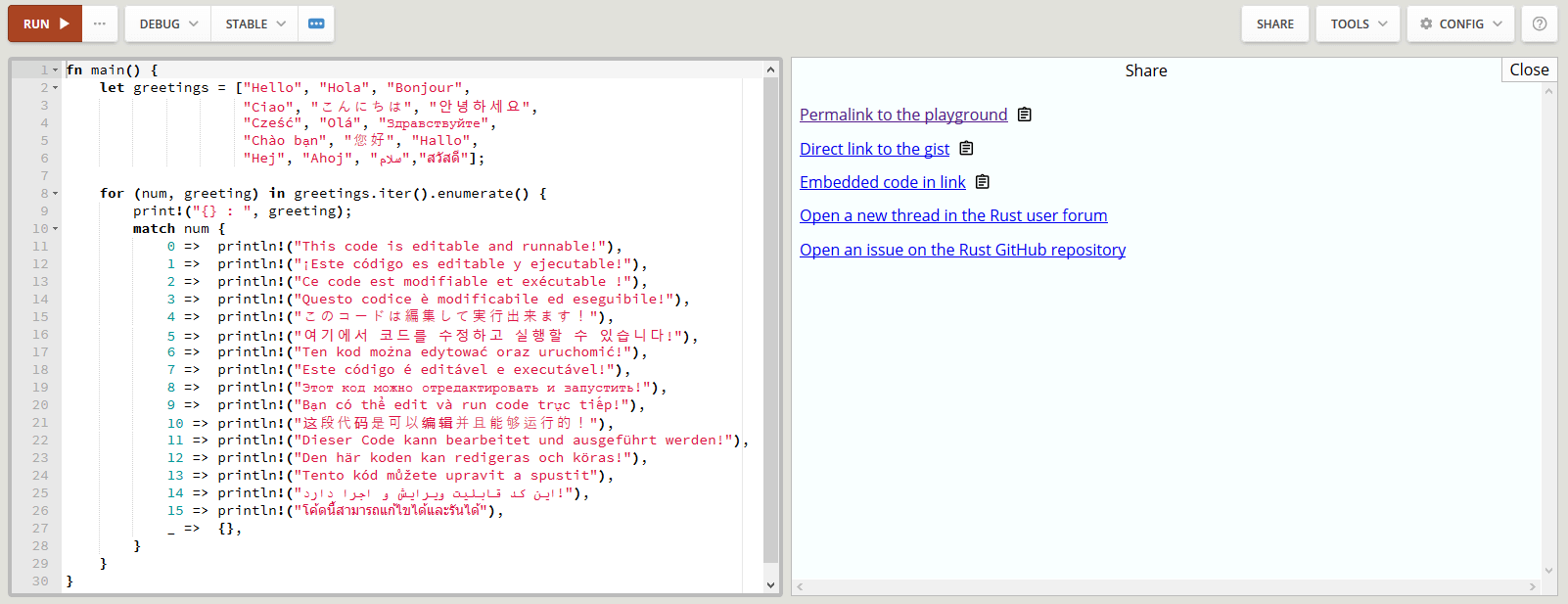

Rust Playground

The Rust Playground is great. I used it to quickly test questions. For example, what is the std::mem::size_of result for various structs?

It's also great for creating snippets to share in the r/rust newbie thread. Which I did on more than a few occassions.

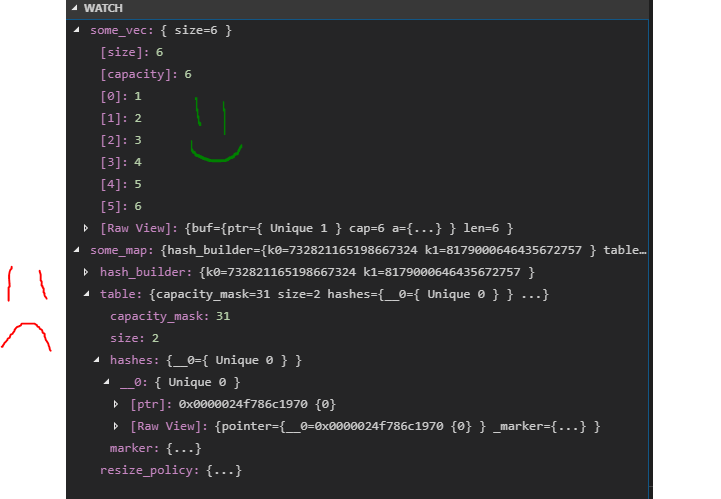

Debugging

Rust's debugging story is "needs improvement".

Visual Studio Code is pretty easy to configure as a debugger. Step debugging actually works. So does the watch window.

Unfortunately most containers aren't inspectable. Vec works because it's basically an array. HashMap and HashSet are inscrutable.

Visual Studio has natvis for C++. It's not great. I have a lot of complaints. It's way better than Rust's nothing.

I'm hoping Rust 2019 lays the groundwork for improved IDE and debugging in 2020.

Bit Packing

Option<bool> is only one byte. Option is also one byte for an enum when the enum value doesn't need all the bits. This was a pleasant surprise.

Implicit Casts

Rust doesn't have implicit casts. If you want to pass a u32 to a function that needs a usize you have to explicitly cast it.

I think I like this. It leads to annoyingly verbose code. But it did prevent at least a couple of bugs.

Inference

Rust is statically typed. However it has robust support for type inference.

let elem = 5u8; let mut vec = Vec::new(); vec.push(elem); vec.push(6u32); // error: expected u8, found u32

The type of vec is inferred to be Vec<u8>. You can leave off a shocking amount of type information. For better or worse.

rustfmt

I enabled rustfmt for auto-formatting. I like it 95% of the time. For AoC I'm jealous of the brevity of Python. Rust is quite verbose, and rustfmt makes it a little worse.

Rustfmt badly needs a clang-format off feature for block level control.

Helper Lambdas

I like lambdas in C++11. I use them regularly for small helpers that exist solely within a function.

This is hard to do in Rust. It causes borrow checker errors similar to iterator closure issue described above.

What make it frustrating is that an uncompilable helper lambda will often work perfectly if changed to a macro. That feels like a bug to me. This case isn't quite mentioned in a recent post on non-lexical lifetimes. I think it should be.

HashMap Initialization

I had a hard time initializing HashMap. Here's what I'd like to do:

let allowable_gear : HashMap<(TileType,TileType), &[Gear]> = { (Rocky, Rocky) = &[Climbing, Torch], (Rocky, Wet) = &[Climbing], (Wet, Narrow) = &[Neither], };

This is a HashMap that maps a pair of TileTypes to an array of Gear. This relationship is constant and known at compile time. I couldn't find a clean, idiomatic way to do this. :(

BinaryHeap

The standard library provides a max-heap. I regularly needed a min-heap. I got one by making a custom struct with custom compare function.

This was a bit of a pain. It could have been easier. Priority queues with customizable compare functions seems like a useful thing that would exist. I'm probably doing it wrong?

Rc<RefCell<Foo>>

I've barely touched Rc and RefCell. For day23 I wrote an Octree and it used an Rc<RefCell<OctreeNode>>. I wanted to typedef that to OctreeNodePtr. Except that didn't work for creating new values.

Graphs are notoriously difficult in Rust. I'm pretty sure I made my Octree incorrectly. But I'm not sure what I should have done instead. Suggestions welcome!

Sorting

For several puzzles I sorted a struct based on sequential fields. For example:

dates.sort_by(|a,b| { if a.year != b.year { a.year.cmp(&b.year) } else if a.month != b.month { a.month.cmp(&b.month) } else if a.day != b.day { a.day.cmp(&b.day) } else if a.hour != b.hour { a.hour.cmp(&b.hour) } else { a.minute.cmp(&b.minute) } });

I wonder if there is a nice macro crate to help with this? I'd love to write:

compare!(a, b, year, month, day, hour, minute);

My sort for day23 was more complex. It sorted by foo.max_possible then foo.bounds.volume() then foo.bounds.distance_from(origin). Here's one idea for a useful sort API.

foos.sort_by(|v| v.max_possible) .then_by(|v| v.bounds()) .then_by(|v| v.bounds.distance_from(origin)) .sort();

That would be great. That API design feels pretty close to right. What do you think?

Boilerplate

I chose to write a lot of boilerplate in my solutions. I wanted to keep all my code in one place for each puzzle. I didn't want to use ambiguous tuples of unnamed types.

I wrote struct Pos for 8 out of 25 days. In i8, i16, and i32 flavors. I probably should have used cgmath or nalgebra. One my side projects is a Rust crate for video game 3d math. Maybe it'll be done by Advent of Code 2019.

Build Profiles

Cargo doesn't have custom build profiles. This blows my mind.

The included profiles are dev, release, bench, test, and doc. Only dev and release are used for running a program. Historically I've run with debug, internal, release, and retail.

There is a blog post and tracking issue that lead me to believe custom profiles this will happen someday. It may be awhile.

Link-Time Optimization

Release builds set link-time optimization to false by default. This is the correct choice due to build times.

Enabling LTO and changing codegen units reduced my run-time from 700ms to 600ms. That's 1.17x times faster and all I did was change compiler flags! More details available in other post.

When Rust ships custom build profiles I'd love to see a MaxSpeed config. RipGrep doesn't ship binaries with LTO. I think the lack of custom profiles is causing a lot of shipped Rust code to leave free performance on the table.

New Primitive Types

I wish Rust provided u24 and i24 types. They're useful in a few scenarios. They already exist inside various audio crates. I couldn't find a robut standalone implementation.

I wish there was an f16 half-precision float. There's a good internals thread on minifloats that makes me think f16 will happen eventually.

Crates

I used a handful of crates. Here's a brief list.

- criterion - Statistics-driven microbenchmarking

- derive_more - Add ability to derive common traits

- easycurses - Wrapper crate to pancurses which wraps ncurses (Unix) and pdcurses (Windows). Used for rendering flicker-free console output.

- flame - instrumented flamegraphs

- flamer - macros to simplify flame instrumentation

- fnv - Fowler–Noll–Vo based HashMap and HashSet

- hashbrown - Super fast HashMap/HashSet based on Google's SwissTable.

- itertools - Extra iterator tools. I like quite iproduct! and minmax.

- lazy_static - Macro to lazily evaluate static variables

- rayon - Data-parallelism library

- regex - Regular expressions

I have not tried the parsing libraries nom or pest. I wonder if they would have made parsing day 24 easy? I'd like to investigate them in the future.

Crate Discovery

Crate discovery is non-existent. GitHub stars is not a useful metric. Downloads on crates.io is ok but not great. I looked for some replacements for std::collections containers. It was hard to tell which crates were worth trying.

I got a huge speed-up when I replaced the built-in HashMap with an FnvHashMap. Through dumb luck I found Hashbrown, which is even faster.

There needs to be a better way to find crates. This is a hard problem.

Clippy

Clippy is an easy to use linter to catch common issues.

I hit and fixed ~25 lints. They were minor issues or recommended improvements. However I did have to disable a few lints.

needless_range_loop

Bad lint. I iterated 0..3 to grab components out of multiple Vec2 structs. My struct lacked iterators. I could have implemented an iterator and used zip. A simple for-loop is better.

too_many_arguments

Generally true. I feel my use case was appropriate.

map_entry

Bad lint. It was straight wrong.

For day22 I had a HashMap of tiles. Each tile had a cost that was dependent on tiles up and to the left. I wrote this recursively.

fn get_tile(&mut self, pos: pos) -> &mut Tile { if !self.tiles.contains_key(&pos) { let new_value = compute_value(self.get_tile(left), self.get_tile(up)); self.tiles.insert(pos, new_value); } self.tiles.get_mut(&pos) }

The lint recommended version is:

fn get_tile(&mut self, pos: pos) -> &mut Tile { self.tiles .entry(&pos) .or_insert_with(|| { let new_value = compute_value(self.get_tile(left), self.get_tile(up)); self.tiles.insert(pos, new_value); }); }

This doesn't compile. entry stores a reference to tiles. Then or_insert_with creates a closure with a mutable reference to tiles for recursion. That's not allowed.

My recursive pattern isn't common. I'm not surprised Clippy can't handle it. It's a shame because I really like the entry API with or_insert and or_insert_with.

Lint Control

Clippy needs a way to disable lints for individual functions, blocks, or lines of code. I don't want to turn these lints off everywhere. This is the same issue as wanting clang format off for rustfmt.

Sentinels

One feature I wish Rust had was a helper struct for sentinel values. Consider an 8-bit value where you want to special case 255 to mean "no value". Or an i32 where -1 means "no value". This is pretty common.

One Rusty, ahem, option would be an Option; Option<u8>. Another might be an enum; enum EFoo { None, Foo(u8) }.

Both these approaches use two bytes of storage. One byte for the u8, and another for a flag. An Option<u32> bloats to a whopping 8 bytes. An Option<u64> is, wait for it, 16 bytes.

What I want is a struct that is stored as the underlying type and has a getter which returns an Option<T>. The getter returns None if the inner value is a specific value.

This feature may not be possible today. It might be when when const generics land.

There is NonZeroU32. It's similar-ish, but only works for zero. There isn't a Non255U8 or, thankfully, Non4294967296U32.

Final Thoughts

I used Advent of Code as a means to learn Rust. This was a great idea and one I strongly recommend. AoC is a great way to learn any language.

I won't claim to know Rust. But I do know a lot more today than I did before. Progress is clear success in my book.

Thanks for reading.

Links

Solving Advent of Code in Under a Second

Source Code

Project